Networking meetings, events and webinars

Intranet, digital workplaces, Digital Employee Experience, internal communications, AI, Microsoft 365 and Knowledge Management

Find your networking group

Upcoming webinars

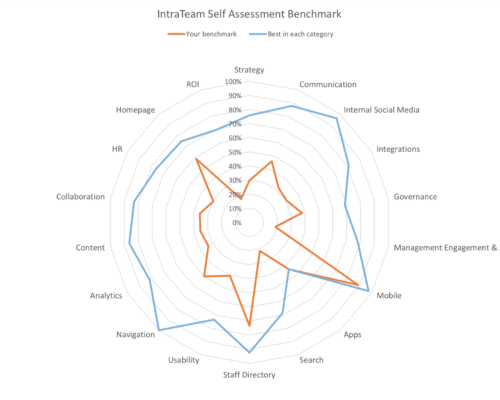

Benchmark your intranet / the digital workplace

With the self-assessment benchmark, you can measure how much of the potential of your intranet or digital workplace you are actually using. We give you an overall score and compare your scores in 18-19 categories with the best in each category. We can also compare you with the best in each category within your industry or among similar organizations.

I just love this dollop of insights once a week. Thanks Kurt!

IntraTeamNews has become a must-read for me, and honestly, not many newsletters in my inbox achieve that 😉. It’s a very good and informative newsletter.

I love receiving IntraTeamNews!

I look forward to every issue of IntraTeamNews. It sparks ideas as to how I can solve issues or deliver solutions within my own organization – every time.

I really enjoy the weekly IntraTeamNews.

IntraTeam newsletter is the best way to be on top of the digital workplace news, strategies, events and tools. A weekly must!

I love the newsletters!

I’m looking forward to your newsletters, they always contain nuggets of interesting information.

I subscribe to your excellent newsletter, it is by far the most interesting newsletter I know of, so I read it with great interest every week.

Our team

Contact us

Do you have any questions?